| This is documentation for Semarchy xDM 5.3 or earlier, which is no longer supported. For more information, see our Global Support and Maintenance Policy. |

Data certification

This page explains the jobs and the process involved in certifying data published into the hub.

Integration job

The integration job processes data submitted by an external load and runs this data through the certification process. This process is a series of steps to create and certify golden data from source data.

This job is automatically generated using the data quality rules that you define in the model. It uses the tables created in the data hub when you deploy a model edition.

| Although a good understanding of the process is not required to publish source data or consume golden data, it is necessary to drill down into the various intermediate structures (e.g., to review the rejects or the duplicate records detected by the integration job for a given golden record). |

An integration job is a sequence of tasks used to certify golden data for a group of entities. The model edition deployed in the data location brings several integration job definitions with it. Each of these job definitions is designed to certify data for a group of entities.

Integration job definitions, as well as integration job logs, are stored in the repository.

For example, a multi-domain hub contains entities for the PARTY and PRODUCTS domains, and has two integration job definitions:

- INTEGRATE_CUSTOMERS certifies data for the entities Party, Location, etc.

- INTEGRATE_PRODUCTS certifies data for the entities Brand, Product, Part, etc.

Integration jobs run when source data has been loaded in the landing tables and is submitted for golden data certification.

Each integration job is the implementation of the overall certification process template. It may contain all or some of the steps of this process.

The certification process

The certification process creates consolidated and certified golden records from various sources:

- Source records, pushed into the hub by middleware systems on behalf of upstream applications (known as publishers).

  Depending on the type of entity, these records are either converted to golden records directly (basic entities) or matched and consolidated into golden records (ID- and fuzzy-matched entities). When matched and consolidated, these records are referred to as master records. The golden records they have contributed to creating are referred to as master-based golden records.

- Source authoring records, authored by users in the MDM applications.

  When users author data in an MDM application, depending on the entity type and the application design, they perform one of the following operations:

  - They create new golden records or update existing golden records that exist only within the hub, not with any of the publishers. These records are referred to as data-entry-based golden records. This pattern is allowed for all entities, but basic entities support only this pattern.

  - They create or update master records on behalf of publishers, submitting these records to matching and consolidation. This pattern is allowed only for ID- and fuzzy-matched entities.

  - They override golden values resulting from the consolidation of records pushed by publishers. This pattern is allowed only for ID- and fuzzy-matched entities.

- Delete operations, made by users on golden and master records from entities with delete enabled.

- Matching decisions, taken by data stewards for fuzzy-matched entities, using duplicate managers. Such decisions include confirming, merging, or splitting groups of matching records as well as accepting/rejecting suggestions.

The certification process takes these various sources and applies the rules and constraints defined in the model to create, update, or delete golden data that business users browse using the MDM applications and that downstream applications consume from the hub.

This process is automated and involves several steps, automatically generated from the rules and constraints, which are defined in the model based on the functional knowledge of the entities and the publishers involved.
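The authoring patterns listed above depend on the entity type. As a minimal sketch of that rule, the following Python snippet encodes which patterns are allowed for which entity type. The names `EntityType`, `AuthoringPattern`, and `allowed_patterns` are hypothetical and chosen for illustration only; they are not part of the product.

```python
from enum import Enum


class EntityType(Enum):
    BASIC = "basic"
    ID_MATCHED = "ID-matched"
    FUZZY_MATCHED = "fuzzy-matched"


class AuthoringPattern(Enum):
    # Hypothetical labels for the three operations described in the text.
    DATA_ENTRY = "create or update data-entry-based golden records"
    MASTER_ON_BEHALF = "create or update master records on behalf of publishers"
    OVERRIDE = "override consolidated golden values"


def allowed_patterns(entity_type: EntityType) -> set[AuthoringPattern]:
    """Return the authoring patterns allowed for an entity type."""
    if entity_type is EntityType.BASIC:
        # Basic entities support only the data-entry pattern.
        return {AuthoringPattern.DATA_ENTRY}
    # ID- and fuzzy-matched entities allow all three patterns.
    return set(AuthoringPattern)
```

Whether a given application actually exposes each allowed pattern still depends on the application design, which this sketch does not model.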

The following sections describe the details of the certification process for ID-, fuzzy-matched and basic entities, as well as the deletion process for all entities.

Certification process for ID- and fuzzy-matched entities

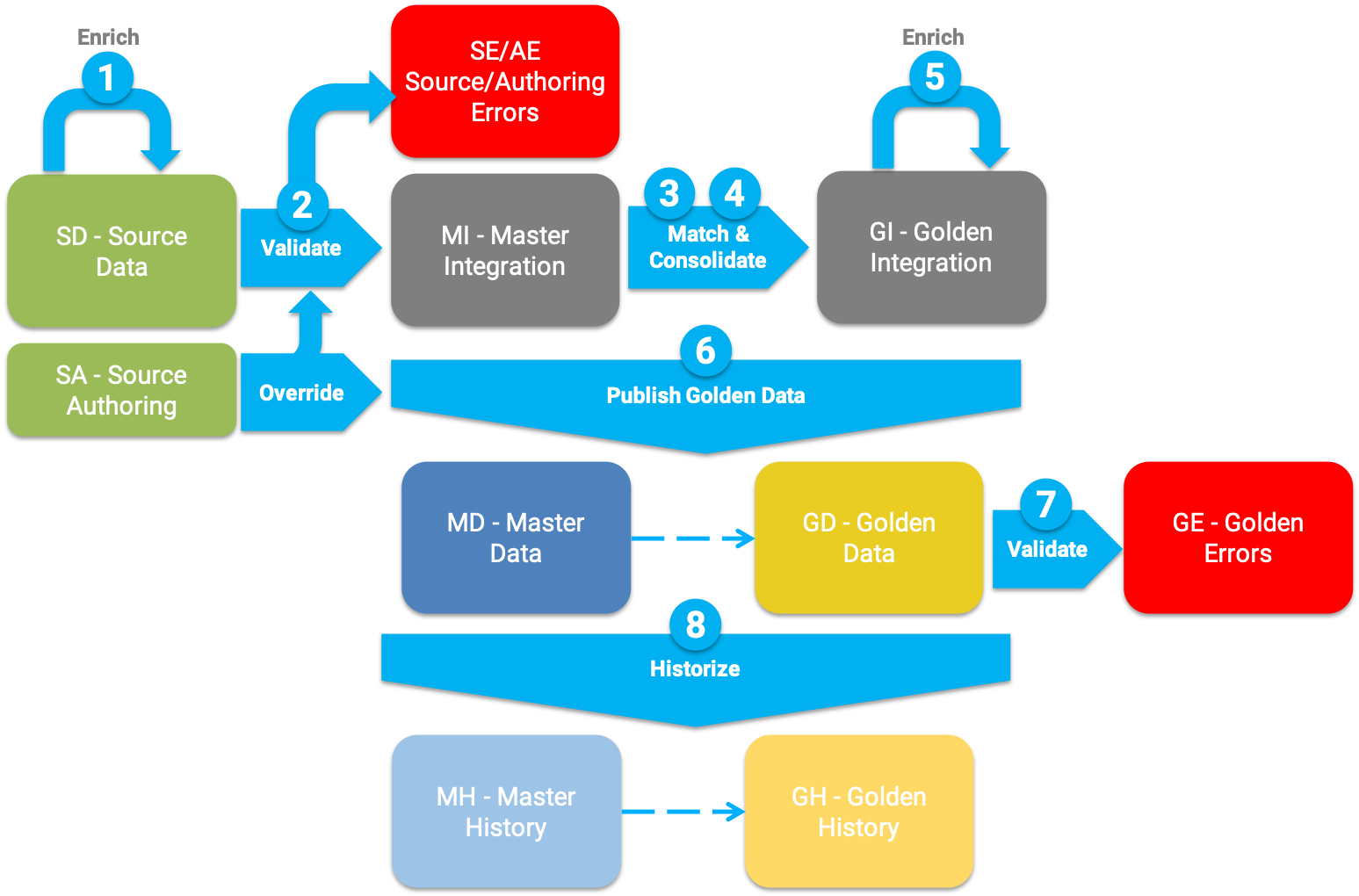

The following figure describes the certification process for ID- and fuzzy-matched entities, as well as the tables involved in this process.

The certification process involves the following steps:

- Enrich and standardize source data: source authoring records (in the SA tables) created or updated on behalf of publishers and source records (in the SD tables) are enriched and standardized using the SemQL and API (Java plugin or REST client) enrichers, executed pre-consolidation.

- Validate source data: the enriched and standardized records are checked against the various constraints executed pre-consolidation. Erroneous records are ignored for the rest of the processing and the errors are logged into the SE (source errors) and AE (authoring errors) tables.

  NOTE: Source authoring records are enriched and validated only for basic entities. For ID- and fuzzy-matched entities, source authoring records are not enriched and validated.

- Match and find duplicate records: for fuzzy-matched entities, this step matches pairs of records using a matcher and creates groups of matching records (match groups). For ID-matched entities, matching is simply made on the ID value.

  The matcher works as follows:

  - It runs a set of match rules. Each rule has two phases: first, a binning phase creates small bins of records. Then a matching phase compares each pair of records within these small bins to detect duplicate records.

  - Each match rule has a match score that expresses how strongly the pair of records matches. A pair of records that match according to one or more rules is given the highest match score of all these rules. Match pairs with scores and rules are stored in the DU table.

  - When a match group is created, a confidence score is computed for that group. According to this score, the group is marked as a suggestion or immediately merged, and possibly confirmed. These automated actions are configured in the merge policy and auto-confirm policy of the matcher.

  - Matching decisions taken by users on match groups (stored in the UM table) are applied at that point, superseding the matcher’s choices.

- Consolidate data: this step consolidates match group duplicates into single consolidated records. The consolidation rules created in the survivorship rules define how the attributes consolidate. Integration master records and integration golden (consolidated) records are stored at that stage in the GI and MI tables.

- Enrich consolidated data: the SemQL and API (Java plugin or REST client) enrichers executed post-consolidation run to standardize or add data to the consolidated records.

- Publish certified golden data: this step finally publishes the golden records for consumption. The final master and golden records are stored in the GD and MD tables.

  - This step applies possible overrides from source authoring records (involving the SA, SF, GA and GF tables), according to the override rules defined as part of the survivorship rules.

  - This step also creates or updates data-entry-based golden records (that exist only in the MDM system), from source authoring records.

- Validate golden data: the quality of the golden records is checked against the various constraints executed on golden records (post-consolidation). Note that, unlike the pre-consolidation validation, it does not remove erroneous golden records from the flow, but flags them as erroneous. The errors are also logged (in the GE tables).

- Historize data: golden- and master-data changes are added to their history (stored in the GH and MH tables) if historization is enabled.
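The two phases of a match rule described in the matching step can be sketched in Python as follows. This is only an illustration of the binning and pairwise matching idea under simple assumptions: records are plain dictionaries with an `id` key, and `MatchRule`, `find_match_pairs`, the rule names, and the scores are hypothetical. It does not reproduce the generated job, the confidence score of a match group, or user matching decisions.

```python
from collections import defaultdict
from dataclasses import dataclass
from itertools import combinations
from typing import Callable

Record = dict  # hypothetical record shape: a plain dict with an "id" key


@dataclass
class MatchRule:
    name: str
    score: int                                 # match score of the rule
    bin_key: Callable[[Record], object]        # binning phase
    matches: Callable[[Record, Record], bool]  # matching phase


def find_match_pairs(records, rules):
    """Return {(id_a, id_b): (best_score, [rule names])} for matching pairs."""
    pairs = {}
    for rule in rules:
        # Binning phase: group records into small bins.
        bins = defaultdict(list)
        for rec in records:
            bins[rule.bin_key(rec)].append(rec)
        # Matching phase: compare each pair of records within a bin.
        for bucket in bins.values():
            for a, b in combinations(bucket, 2):
                if rule.matches(a, b):
                    key = tuple(sorted((a["id"], b["id"])))
                    best, names = pairs.get(key, (0, []))
                    # A pair keeps the highest score of all rules it matched.
                    pairs[key] = (max(best, rule.score), names + [rule.name])
    return pairs


records = [
    {"id": 1, "name": "Acme Corp", "zip": "75001", "email": "info@acme.example"},
    {"id": 2, "name": "ACME Corp", "zip": "75001", "email": "info@acme.example"},
    {"id": 3, "name": "Globex", "zip": "75001", "email": "hello@globex.example"},
]
rules = [
    MatchRule("SAME_NAME_AND_ZIP", 80, lambda r: r["zip"],
              lambda a, b: a["name"].lower() == b["name"].lower()),
    MatchRule("SAME_EMAIL", 95, lambda r: r["email"].split("@")[1],
              lambda a, b: a["email"] == b["email"]),
]
pairs = find_match_pairs(records, rules)
# pairs == {(1, 2): (95, ["SAME_NAME_AND_ZIP", "SAME_EMAIL"])}
```

Binning keeps the number of comparisons small: only records that share a bin are compared, so the pairwise phase never runs over the whole data set at once.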

| Source authoring records are not enriched or validated for ID- and fuzzy-matched entities as part of the certification process. These records should be enriched and validated as part of the steppers into which users author the data. |
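The two validation steps of this process treat failing records differently: pre-consolidation validation removes erroneous records from the flow, whereas post-consolidation validation only flags them. The sketch below illustrates that difference with in-memory lists standing in for the error tables. The function names, the `has_errors` flag, and the record shape are assumptions made for this example.

```python
def violations(record, constraints):
    """Return the names of the constraints that the record fails.

    `constraints` is a list of (name, check) tuples, where check(record)
    returns True when the record is valid.
    """
    return [name for name, check in constraints if not check(record)]


def validate_pre_consolidation(records, constraints):
    """Reject erroneous records: they leave the flow and errors are logged."""
    valid, errors = [], []
    for rec in records:
        failed = violations(rec, constraints)
        if failed:
            errors.append({"id": rec["id"], "constraints": failed})
        else:
            valid.append(rec)
    return valid, errors


def validate_post_consolidation(golden_records, constraints):
    """Flag erroneous golden records but keep them in the flow."""
    flagged, errors = [], []
    for rec in golden_records:
        failed = violations(rec, constraints)
        # The record stays in the output; only a flag is added.
        flagged.append({**rec, "has_errors": bool(failed)})
        if failed:
            errors.append({"id": rec["id"], "constraints": failed})
    return flagged, errors
```

With the same input and constraints, the first function returns fewer records than it received when errors exist, while the second always returns as many records as it received.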

Certification process for basic entities

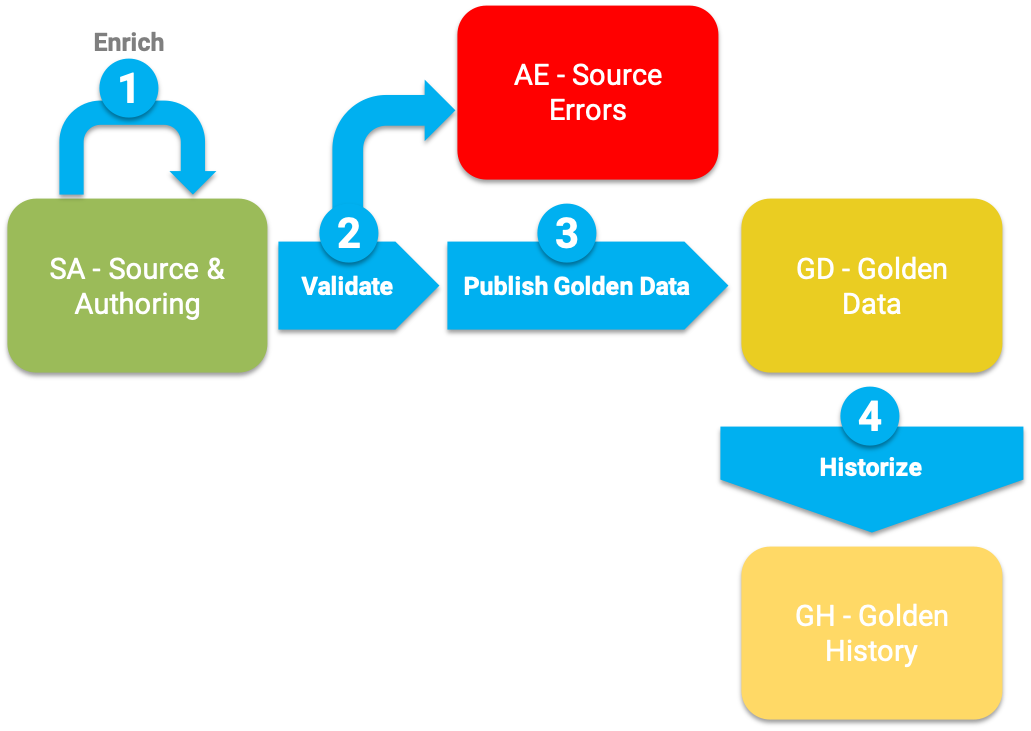

The following figure describes the certification process for basic entities as well as the tables involved in this process.

The certification process involves the following steps:

- Enrich and standardize source data: during this step, the source records and source authoring records (both stored in the SA tables) are enriched and standardized using SemQL and API (Java plugin and REST client) enrichers executed pre-consolidation.

- Validate source data: the quality of the enriched source data is checked against the various constraints executed pre-consolidation. Erroneous records are ignored for the rest of the processing and the errors are logged (in the AE tables).

- Publish certified golden data: this step finally publishes the golden records for consumption (in the GD tables).

- Historize data: golden data changes are added to their history (stored in the GH table) if historization is enabled.
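The four steps above can be sketched as a single function. This is a simplified illustration, not the generated job: a dictionary and lists stand in for the GD, AE, and GH tables, enrichers are plain functions that return a new record, and the name `certify_basic` and its parameters are hypothetical.

```python
def certify_basic(source_records, enrichers, constraints, golden, history=None):
    """Run the four steps for a basic entity and return the logged errors.

    `constraints` is a list of (name, check) tuples; `golden` is a dict keyed
    by record ID; `history` is a list, or None when historization is disabled.
    """
    errors = []
    for rec in source_records:
        # 1. Enrich and standardize source data.
        for enrich in enrichers:
            rec = enrich(rec)
        # 2. Validate source data; erroneous records leave the flow.
        failed = [name for name, check in constraints if not check(rec)]
        if failed:
            errors.append({"id": rec["id"], "constraints": failed})
            continue
        # 3. Publish certified golden data.
        golden[rec["id"]] = rec
        # 4. Historize data, only if historization is enabled.
        if history is not None:
            history.append(dict(rec))
    return errors
```

Because basic entities have no matching or consolidation step, each valid source record maps directly to one golden record.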


Deletion process

A delete operation (for basic, ID-, or fuzzy-matched entities) on a golden record involves the following steps:

- Propagate through cascade: extends the deletion to the child records directly or indirectly related to the deleted ones with a cascade configuration for deletion propagation.

- Propagate through nullify: nullifies child records related to the deleted ones with a nullify configuration for the deletion propagation.

- Compute restrictions: cancels the deletion of records that have related child records with a restrict configuration for deletion propagation. If restrictions are found, the entire delete action is canceled as a whole.

- Propagate deletion to owned master records: propagates deletion to the master records attached to deleted golden records. This step only applies to ID- and fuzzy-matched entities.

- Publish deletion: tracks record deletion (in the GX and MX tables), with the record values for soft delete actions only, and then removes the records from the golden and master data (in the GD and MD tables). Soft delete does not remove data from the SD, SA, MH, or GH tables.

When doing a hard delete, this step deletes any trace of the records in every table (SA, SD, UM, MH, GH, etc.). The only trace of a hard delete is the ID (without data) of the deleted master and golden records, in the GX and MX tables.

Deletes are tracked in the history for golden and master records (in the MH and GH tables), if historization is configured.
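The three propagation steps (cascade, nullify, restrict) can be sketched as follows. This is a minimal illustration under strong simplifications: each child record holds a single parent reference with its own propagation setting, whereas in a real model the setting belongs to the reference between entities. The names `Child`, `DeletionRestricted`, and `plan_deletion` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Child:
    id: str
    parent_id: Optional[str]
    on_delete: str  # "cascade", "nullify" or "restrict"


class DeletionRestricted(Exception):
    """Raised when a restricted child cancels the whole delete action."""


def plan_deletion(root_ids, children):
    """Return (ids_to_delete, ids_to_nullify) or raise DeletionRestricted."""
    to_delete = set(root_ids)

    def hit(child, mode):
        # True for a surviving child that references a deleted record.
        return (child.on_delete == mode
                and child.parent_id in to_delete
                and child.id not in to_delete)

    # Propagate through cascade, following direct and indirect children.
    changed = True
    while changed:
        changed = False
        for c in children:
            if hit(c, "cascade"):
                to_delete.add(c.id)
                changed = True
    # Propagate through nullify: these children lose their reference.
    to_nullify = {c.id for c in children if hit(c, "nullify")}
    # Compute restrictions: any restricted child cancels the entire action.
    blocking = sorted(c.id for c in children if hit(c, "restrict"))
    if blocking:
        raise DeletionRestricted(f"blocked by: {blocking}")
    return to_delete, to_nullify
```

Raising an exception before anything is removed mirrors the rule that a restriction cancels the delete action as a whole rather than skipping individual records.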

| It is not necessary to configure in the job all the related entities that may be deleted by cascade. The job generation automatically detects the entities that must be included for deletion based on the entities managed by the job. |

For more information about the model rules and artifacts involved in the generation of this process, see The data certification process.